Kathleen Bartzen Culver is an assistant professor in the University of Wisconsin-Madison School of Journalism & Mass Communication and director of the Center for Journalism Ethics.

When I work with young journalists trying to develop a grounding in ethics, I tell them the word is often misunderstood. “I don’t really have a rubber stamp to say something is or is not ethical,” I say, arguing instead that ethics is about reasoning and coming to justifiable conclusions in difficult cases.

And then, I say, there are some “well, duh” kinds of cases, when we ought not even use the word “ethics.”

Plagiarism is a good example. No reasoning needed. Stealing others’ content and playing it off as your own isn’t a choice requiring ethical reasoning. It’s wrong.

When Facebook did an about-face at the end of last year and introduced new means to combat fake news proliferating on its platform, I saw it as something of a “well, duh” case. Allowing blatantly false stories to trend high on the world’s most powerful social network is wrong.

Expanding the responsibility ranks

Facebook is fond of defining itself out of the “news media” business, emphasizing that it is a platform, not a content provider. This is true enough. But with nearly 1.8 billion active monthly users, a platform need not be producing or editing content to wield tremendous influence in a society. Because of this, Facebook has a corporate social responsibility – an ethical obligation – to address patently false information.

Mark Zuckerberg’s initial resistance to accepting the mantle of dealing with this problem had three main components.

First, he emphasized that 99 percent of news content on the platform was “authentic,” leaving the impression that the remaining 1 percent comprising so-called “fake news” (really a toxic stew of hoaxes and wild conspiracy theories) was some tiny figure. But combine that 1 percent with the billions of users, and you come away with a different impression.

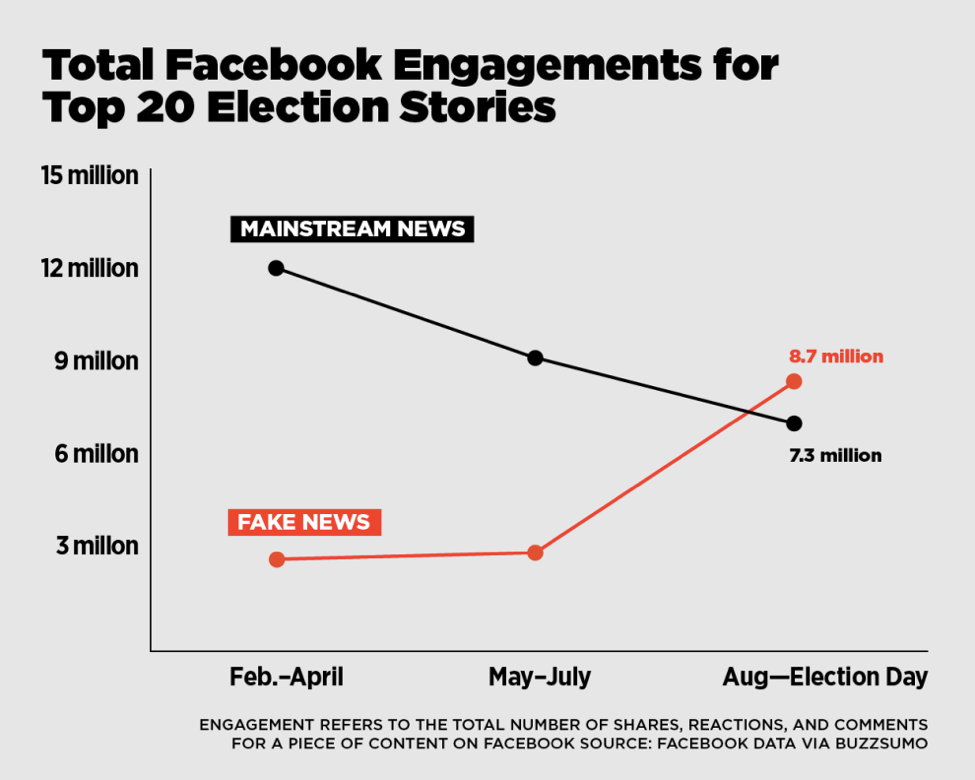

More importantly, BuzzFeed’s Craig Silverman provided an analysis showing that the top fake news stories about the election surpassed the top real news stories on engagement – such things as shares, comments and reactions.

If the 1 percent of news stories Facebook acknowledges as fake outperform real news, the relative weight of their impact increases.

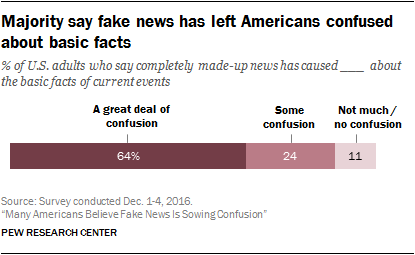

Pew Research Center

The second strand of Zuckerberg’s argument was that fake news was unlikely to have swayed the U.S. presidential election in Donald Trump’s favor. This is a seriously problematic framing. First, we have no real way of knowing, so it’s important to refrain from speculating either that fake news affected the outcome or that it didn’t.

But even more critically, we have to commit ourselves to defending the very concept of ascertainable truth, regardless of outcomes. Take a football game, for example. It’s almost always exceedingly difficult to directly demonstrate that poor referee calls determine the outcome of a game (except the one time my Green Bay Packers fell victim to a legendary blown call). Yet blown calls directly affect the integrity of the game.

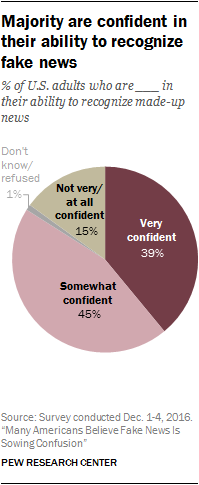

Pew Research Center

Our information environment is analogous, yet far more consequential. Misinformation, disinformation, hoaxes and conspiracy theories pollute that environment and threaten our abilities to govern ourselves effectively.

This leads to Zuckerberg’s third point, that Facebook staffers should not be the arbiters of truth. He is exactly correct that they should not. A massive corporation with wide and deep influence over its users, Facebook must wield its power carefully. It does have the ability to sway elections, so it should mind its gates with extreme caution.

But there is a clear and serious difference between debatable questions and matters of pure truth or falsity. Some liberal partisans would say a story from a conservative outlet claiming Hillary Clinton’s tax plan would damage the economy is false. Facebook has no business intervening in this debatable question. But when a hoax site claims the Pope has endorsed Donald Trump when, in fact, he did not? This is patently false and should not appear on the platform, much less in its trending stories.

What now?

The changes now underway at Facebook are positive. They rely in part on the power of crowds, offering users the opportunity to report a post as fake. But then a crucial second step reduces the possibility that motivated partisans could take down real news by collaborating to label it fake. In this step, the platform is turning to the International Fact-Checking Network, coordinated through the Poynter Institute.

These fact-checkers will not only determine whether a story is false, but also link to debunking information. This sourcing is required, meaning vetted information will be attached to all stories labeled false.

The labeling itself has key elements. First, stories will be highlighted with red warnings that a post has been disputed. Second, these stories cannot be shared without a user seeing a second warning that the post is questionable. Finally, no story debunked by the network can be converted into an ad or promoted post.

Algorithmically, such posts will be ranked lower in news feeds. Facebook is also rolling out plans to gut the financial incentives some posters have for spreading fake news, a move Google has made as well, booting some 200 publishers off its ad network in the fourth quarter of 2016.

Overall, these are strong first steps, and the tech giant indicated that they’re exactly that: first steps. Facebook’s statement on the measures concluded, “It’s important to us that the stories you see on Facebook are authentic and meaningful. We’re excited about this progress, but we know there’s more to be done. We’re going to keep working on this problem for as long as it takes to get it right.”

Who is responsible for truth?

This turn toward better accepting the responsibilities inherent in the power of its platform is the right move for Facebook. The question now, however, is to what extent the public also picks up its share of the duty to make decisions based on truthful information. It is all too easy to share viral fake news, but even more critically, it’s easier than ever to seek only that information that confirms our own worldviews. Democracy is a challenging business. We have an obligation to make use of reliable information – important facts couched in their proper contexts.

If we fail to seek and to consume that information, Facebook’s fake news saga will mean very little indeed.

Kathleen Bartzen Culver is an assistant professor in the University of Wisconsin-Madison School of Journalism & Mass Communication and director of the Center for Journalism Ethics. Long interested in the implications of digital media on journalism and public interest communication, Culver focuses on the ethical dimensions of social tools, technological advances and networked information. She combines these interests with a background in law and free expression. She also serves as visiting faculty for the Poynter Institute for Media Studies and education curator for MediaShift.

UW-Madison’s Center for Journalism Ethics will tackle fake news and other critical topics at its March 31, 2017, conference: Truth, Trust and the Future of News. Visit go.wisc.edu/ethics2017 for more information on attending in person or participating via free video livestream.